Data wrangling: definition, steps, tools

Data wrangling might sound like quite the intimidating tech term on the surface yet anyone can develop an understanding of this process with a little bit of time and effort. Data wrangling, also known as data munging, is part of the larger process of “data preparation”. Data wrangling is the process performed to collect, select, re-structure, enrich and ultimately transform information with the aim of answering a specific question. This overarching question is usually highly analytical.

Data wrangling chews up a considerable amount of time. For example, it’s a well-known fact that data scientist are spending much of their time in data preparation activities (more than 80% of their time, actually) and a large part of these data preparation activities is focused on“Data wrangling”.

Summary

- A brief explanation of data wrangling

- Why data wrangling is so important

- Data wrangling steps

- The challenges of data wrangling

- Techniques et tools for data wrangling

- Is data wrangling worth the trouble ?

A Brief Explanation of Data Wrangling

Data wrangling converts raw data to prepare it for subsequent analysis by data wranglers, also referred to as mungers. This means data wrangling is the work performed on data before it is broken down through in-depth analysis. Data wrangling encompasses weighing the quality of data with context and converting it into the necessary format for subsequent analysis.

If you’re wondering if data wrangling is worth it, try to think of this process as similar to the foundations needed to support a house.

Why Data Wrangling is so Important

Wrangling generates structured information that can be used to engage in analytical activities: create an Analytic Base Table (or ABT for short), perform time series analysis, create KPI’s, create BI dashboards, create predictive models,etc.. Generating such structured datasets is inherently challenging. However, if the time necessary for accurate and comprehensive data wrangling is invested, it sets the solid foundations required to perform meaningful analytical tasks.

Data Wrangling Steps

Make the commitment to performing data wrangling and you will be that much closer to more effective and helpful data analysis. Though data wrangling is somewhat tedious, it is worth the effort. Data wrangling begins with data discovery in which a general familiarity with the data is established. Data structuring follows in which raw data is collected. Though this data lacks structure, it will eventually be restructured in accordance with the business’s selected analytical model.

The next step is data cleaning in which raw data laden with errors is corrected prior to the shifting of the data to the subsequent stage. Cleaning is centered on addressing outliers, performing corrections and eliminating the bad data. Data enriching is next. This is an opportunity to augment data or embellish it as desired.

Once the data is enriched, it is time to validate it. Validating data brings potential quality issues to the surface so they can be addressed and transformed as necessary. The easiest and fastest way to validate data is to use an auto-ML tool. Indeed, during almost any predictive modeling activities, all the inconsistencies in the data directly surface out and it’s then easy and straightforward to correct them upstream. With an auto-ml tool, you can validate all your ABT with a few mouse clicks.

The Challenges of Data Wrangling

When you engage in data wrangling activities, you are manipulating your data with the overarching objective of answering a specific business-related question. This means that the transformation applied on your data will be guided by the very nature of the business questions that you are attempting to solve. This also means that, to engage in meaningful data wrangling activities, a solid comprehension of the analyzed business process is required. This means that, most of the time, you’ll find that the “business-analysts” are the profiles that are the most qualified to perform data wrangling activities because they are the ones that “know your business the best” and these are the ones that also “known all the little subtilities of your data sources” perfectly well.

The “business-analysts” might be the best profiles to get the best results out of your data but, at the same time, unfortunately, these profiles are usually code-adverse and performing complex data manipulation can quickly become a challenge for them. So, quite often, the real challenge of the Data wranglers are just simply the barbaric codes and the incomprehensible procedures that the Data wranglers need to write to get the data in the “correct shape”. If you already ended-up writing SQL commands that completely fills-up a height of 3 screens, you know what I mean. Or, even worse, if you ended-up writing Excel-VBA-macro-codes that auto-generates incomprehensible machine-generated SQL codes, you’ll also understand what I mean.

Hopefully this is now something from the past since there now exists specialized data wrangling tools that permit the creation of any data transformations, whatever their complexity, without writinga single line of code. At last, we can finally free the business-analysts from the shackles of painful code-intensive sessions and get better, more meaningful analytical results, in a much easier and faster way. Ultimately, the new generation of data wrangling tools have been created to relieve the business-analysts from all the pain and suffering oftheir daily routines in the past.

Actually, with the proper modern tools, data wrangling almost becomes fun because it’s so fast and easy that some enjoyment is obtainedby discovering almost instantaneous interesting insights into your data.

Techniques and Tools for Data Wrangling

Industry insiders insist the vast majority of analysts invest the bulk of their work hours performing data wrangling instead of actually analyzing data. Historically, those who performed data wrangling were highly technical professionals skilled in statistical languages such as Python and R.

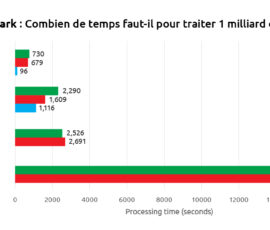

Data wrangling is performed with nuanced techniques and tools. Examples of generic purpose tools that can be used to do data-wrangling occasionally include VBA code in Microsoft Excel spreadsheets, R code, Python code and Perl code. OpenRefine, a more advanced version of Microsoft Excel, is also used. Typically, when using R, you’ll spend time with these libraries : JSOnline (useful for parsing), Purrr (helps with list function operations), DPlyr (data manipulation framing tool). Nowadays, there exists specialized tools that focus almost entirely on data wrangling: Anatella (part of the TIMi Suite), Alteryx, Altair Monarch, etc.

Is Data Wrangling Worth the Trouble?

If you’re wondering if data wrangling is worth it, try to think of this process as similar to the foundations needed to support a house. The structure above will only be useful if a solid foundation is in place. In short, your analytical deliveries (kpi’s, models, etc;) will not prove helpful if they are based on relevant and sufficiently large data obtained through a data wrangling process.

If you invest time in a good data wrangling process (collecting, cleaning, enriching, restructuring information from several sources), you will obtain the highly accurate and actionable data necessary to make truly well-informed decisions.

Though data wrangling is not easy, it is worth the effort because it allows to succeed in all your analytical endeavors. These analytical initiatives have the potential to lead to important discoveries that can prove so valuable that they potentially redefine the way you conduct your business.

Source: IntoTheMind