pipeline. It must be capable of processing not just a single query, but millions of lines of data—reliably, securely, and automatically.

That is precisely the goal of the new Anatella update (version 4.06): to turn generative AI into just another industrial ETL component.

Here’s what this means in practical terms for your data flows.

1. Sovereignty First: Cloud or On-Premises, the Choice Is Yours

The primary obstacle to AI adoption in businesses is security. Sending customer data or confidential documents to OpenAI’s servers in the United States is often out of the question.

Anatella breaks down this barrier by offering a hybrid approach:

- Remote Mode (Cloud): Connect to standard APIs (OpenAI, etc.) for quick tests or to process public data.

- Local Mode (On-Premises): This is the key strength of this update. You can run open-source models (GPT, Llama, Ministral, Gemma, etc.) directly on your infrastructure through the native integration of llama.cpp.

What’s in it for you? Your data never leaves your server (total sovereignty). Plus, by using your own GPU/CPU, you eliminate the variable costs associated with API calls.
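To make the hybrid idea concrete: OpenAI's API and the HTTP server that ships with llama.cpp both accept the same chat-completions request shape, so switching modes can come down to changing the target URL. The sketch below is not Anatella's internal code. The helper `buildChatRequest`, the `staysOnServer` flag, and the model name are hypothetical, and the local address assumes the llama.cpp server's default port (8080).

```javascript
// Hypothetical helper: builds (but does not send) a chat-completions request.
// Assumption: the local llama.cpp server listens on its default port 8080.
const ENDPOINTS = {
  remote: "https://api.openai.com/v1/chat/completions",
  local: "http://127.0.0.1:8080/v1/chat/completions",
};

function buildChatRequest(mode, model, userText) {
  if (!Object.prototype.hasOwnProperty.call(ENDPOINTS, mode)) {
    throw new Error("Unknown mode: " + mode);
  }
  return {
    url: ENDPOINTS[mode],
    // In local mode the payload is addressed to the same machine.
    staysOnServer: mode === "local",
    body: {
      model: model,
      messages: [{ role: "user", content: userText }],
    },
  };
}

// Same request body, two possible destinations.
const localReq = buildChatRequest("local", "my-local-model", "Summarize this ticket.");
console.log(localReq.url, localReq.staysOnServer);
```

Because the request body is identical in both modes, a flow prototyped against a cloud API can in principle be pointed at a local model later without rewriting its prompts.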

2. From “Blah-Blah” to Structured Data (Industrialization)

The problem with LLMs is that they’re verbose. In an ETL process, you don’t need fluff—you need clean JSON.

Anatella now includes two tools to harness the full power of AI:

- The “System” Prompt: To provide strict instructions to the model (e.g., “You are a data extractor; respond only in JSON”).

- The JSON schema: You define a strict grammar, and the LLM is required to respond in this format (e.g., an object containing “Name”, “Amount”, and “Date”). It’s now impossible to get an output that does not match the schema.

What’s in it for you? You can convert free-form text (emails, PDFs, comments) into structured tables that can be used immediately by the subsequent boxes in your graph.
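As an illustration, here is what a schema for the “Name”, “Amount”, “Date” example could look like, together with a small defensive check on a reply. This is a minimal sketch, not the exact format Anatella expects: the variable names, the `matchesSchema` helper, and the sample reply are all made up for the example, and the checker handles only the flat schema shown here.

```javascript
// Illustrative JSON Schema for the "Name / Amount / Date" example.
const invoiceSchema = {
  type: "object",
  properties: {
    Name: { type: "string" },
    Amount: { type: "number" },
    Date: { type: "string" },
  },
  required: ["Name", "Amount", "Date"],
  additionalProperties: false,
};

// Minimal checker covering only the features used above
// (flat object, primitive types, required keys). Not a full validator.
function matchesSchema(value, schema) {
  if (typeof value !== "object" || value === null || Array.isArray(value)) {
    return false;
  }
  for (const key of schema.required) {
    if (!Object.prototype.hasOwnProperty.call(value, key)) return false;
  }
  for (const key of Object.keys(value)) {
    const rule = schema.properties[key];
    if (rule === undefined) {
      if (schema.additionalProperties === false) return false;
      continue;
    }
    if (typeof value[key] !== rule.type) return false;
  }
  return true;
}

// Made-up example of a schema-constrained model reply.
const reply = '{"Name": "ACME Corp", "Amount": 1250.5, "Date": "2024-03-01"}';
const row = JSON.parse(reply);
console.log(matchesSchema(row, invoiceSchema)); // true
```

Each validated object maps directly to one table row, with one column per schema property.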

3. Use Cases: What Can You Automate Today?

This new “box” opens the door to scenarios that were previously very complex to code:

- Intelligent Document Processing (IDP): Attach the contents of a file (PDF, contract) to your prompt. The LLM can then analyze the document to extract specific information, such as IBANs or particular clauses.

- Contextual data cleansing: Use AI to standardize poorly formatted addresses, infer gender based on a first name, or categorize products.

- Synthetic data generation: Create realistic test datasets for your development environments.

4. For Experts: JavaScript Scripting and Control Loops

For simple cases, the Simplified Mode is enough: one input column (Prompt) and one output column (Response).

But for data engineers, the JS Script Mode offers complete control. You can write JavaScript code to interact with the LLM in a more sophisticated way:

- Validation loops: Ask the LLM for its confidence level. If confidence is low, write a loop to rephrase the question and automatically query the model again.

- Managed memory: Use the context (previous exchanges) to create conversations that span multiple iterations.

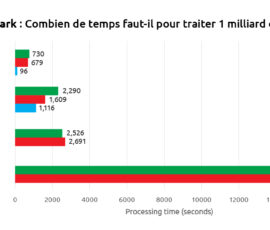
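The two patterns above, a validation loop and managed memory, can be sketched in plain JavaScript as follows. This does not use Anatella's actual scripting API: `askWithRetries` is a hypothetical helper, and `fakeModel` is a stand-in that simulates a model returning an answer with a self-reported confidence, so that the example runs on its own.

```javascript
// Hypothetical stand-in for the real model call. It receives the conversation
// so far and returns { answer, confidence } with confidence in [0, 1].
function fakeModel(messages) {
  const last = messages[messages.length - 1].content;
  // Pretend the model is only confident once the question is explicit.
  const confident = last.includes("Answer with M, F or UNKNOWN");
  return {
    answer: confident ? "F" : "maybe F",
    confidence: confident ? 0.9 : 0.4,
  };
}

// Ask once, then retry with each rephrasing until confidence is high enough.
function askWithRetries(askModel, question, rephrasings, minConfidence) {
  const history = []; // managed memory: every exchange is kept as context
  const prompts = [question].concat(rephrasings);
  let result = null;
  for (let attempt = 0; attempt < prompts.length; attempt++) {
    history.push({ role: "user", content: prompts[attempt] });
    result = askModel(history);
    history.push({ role: "assistant", content: result.answer });
    if (result.confidence >= minConfidence) {
      return { answer: result.answer, attempts: attempt + 1, accepted: true, history: history };
    }
  }
  // All rephrasings exhausted: return the last answer, flagged as not accepted.
  return { answer: result.answer, attempts: prompts.length, accepted: false, history: history };
}

const outcome = askWithRetries(
  fakeModel,
  "What is the likely gender for the first name 'Marie'?",
  ["First name: 'Marie'. Answer with M, F or UNKNOWN only."],
  0.8
);
console.log(outcome.answer, outcome.attempts, outcome.accepted); // F 2 true
```

Limiting retries to a fixed list of rephrasings keeps the loop bounded, which matters when the same script runs over millions of rows.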

5. Performance: TIMi Efficiency Applied to AI

Running AI models locally consumes video memory (VRAM). True to its philosophy of optimization, Anatella intelligently manages server resources:

- Automatic Management: The “Llama.exe” server starts when needed and stops to free up memory.

- CPU & GPU Usage: No GPU? No problem. For lightweight models (< 5 billion parameters), the CPU is enough. The graphics card (GPU) serves as a performance booster, not a deal-breaker.

- Supported quantization: Anatella uses compressed formats (Q4 or Q6). This makes it possible to run massive, high-performance models on a standard consumer graphics card (costing around €500), while drastically reducing memory consumption.
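To see why quantization matters, a back-of-the-envelope estimate helps: the memory needed for the weights is roughly the number of parameters times the bits per weight, divided by 8. The sketch below uses nominal bit widths and a hypothetical 7-billion-parameter model. Real quantized files carry some extra per-block metadata, and the conversation context needs memory on top, so treat the figures as lower bounds.

```javascript
// Rough weight-memory estimate: parameters x bits per weight / 8 = bytes.
// Assumption: nominal bit widths, ignoring quantization metadata and
// the memory used by the context at run time.
function weightMemoryGB(parameterCount, bitsPerWeight) {
  const bytes = (parameterCount * bitsPerWeight) / 8;
  return bytes / 1e9;
}

const params = 7e9; // hypothetical 7-billion-parameter model
console.log(weightMemoryGB(params, 16)); // 14   (uncompressed 16-bit weights)
console.log(weightMemoryGB(params, 6));  // 5.25 (nominal 6-bit quantization)
console.log(weightMemoryGB(params, 4));  // 3.5  (nominal 4-bit quantization)
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the footprint by a factor of four.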

Conclusion

Generative AI is no longer a mystery reserved for Python-speaking data scientists. With this update, it becomes a robust component of your ETL toolkit. Whether you need to summarize, extract, or generate data, you can now do it at scale, with complete security.

For more information, this new feature is documented in detail in section 5.9.12 of AnatellaQuickGuide.pdf. We also recommend reading section 5.1.8, which is packed with useful information on how to use LLMs efficiently.

Want to give it a try? This feature includes automated installation of all the required components. Download the latest version of Anatella and launch your first local LLM in just a few clicks.