A Gentle Introduction to the TIMi Suite

We strongly encourage you to follow the above 8 videos about Anatella because they will greatly help you learn the “TIMi Suite”. The other videos below are only useful if you have very specific needs, that is, if you have a machine learning or clustering exercise to do.

- Segmentation theory: Theoretical Introduction to the PCA technique – part 1.

- Segmentation theory: Theoretical Introduction to the PCA technique – part 2.

- A demonstration of the multivariate segmentation engine of Stardust – part 1.

- A demonstration of the multivariate segmentation engine of Stardust – part 2.
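
The PCA technique covered in these videos looks for the directions along which the data varies the most. As a minimal pure-Python sketch (not the implementation used by Stardust; the function names are hypothetical), the first principal component is the leading eigenvector of the covariance matrix, which power iteration can find:

```python
import math
from typing import List

Matrix = List[List[float]]


def covariance_matrix(rows: Matrix) -> Matrix:
    """Sample covariance matrix of the columns of `rows` (one row per observation)."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    return [
        [sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]


def first_principal_component(rows: Matrix, iterations: int = 200) -> List[float]:
    """Unit vector along the direction of largest variance, found by power iteration."""
    cov = covariance_matrix(rows)
    d = len(cov)
    v = [1.0] * d  # arbitrary non-zero start; must not be orthogonal to the answer
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v


def explained_variance_ratio(rows: Matrix, component: List[float]) -> float:
    """Share of the total variance captured by projecting the data onto `component`."""
    cov = covariance_matrix(rows)
    d = len(cov)
    along = sum(component[i] * cov[i][j] * component[j] for i in range(d) for j in range(d))
    total = sum(cov[i][i] for i in range(d))  # trace = sum of all eigenvalues
    return along / total


if __name__ == "__main__":
    # Toy data lying close to the line y = 2x.
    data = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8], [5.0, 10.1]]
    pc1 = first_principal_component(data)
    print("first component:", [round(x, 3) for x in pc1])
    print("explained variance ratio:", round(explained_variance_ratio(data, pc1), 4))
```

On this toy data the first component points roughly along (1, 2), and it alone captures almost all of the variance, which is exactly why PCA is useful to reduce the number of dimensions before a segmentation.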

BUSINESS INTELLIGENCE BASICS

In this guide, we will see which functionalities each type of analytic solution has to offer, how they compare to each other, and how to make the right choice for your needs.

Classical solutions for Business Intelligence can be roughly categorized as follows:

Data integration: ETL

The main objective of ETL (Extract, Transform, Load) tools is to gather the content of the various databases and operational systems across an organization and to centralize all the data in one place, called a “data warehouse”.
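
To make the three steps concrete, here is a minimal sketch using only the Python standard library. The two "operational systems" are hypothetical in-memory CSV exports with different formats, and an in-memory SQLite database stands in for the data warehouse; a dedicated ETL tool would handle far more sources and transformations.

```python
import csv
import io
import sqlite3

# Hypothetical exports from two operational systems (a web shop and a physical store).
WEB_SHOP_CSV = """order_id,customer,amount_eur,date
W1,alice,120.50,2024-01-03
W2,bob,35.00,2024-01-05
"""
# Assumed store format: ';' separator, decimal comma, DD/MM/YYYY dates.
STORE_CSV = """ticket;client;total;day
S1;ALICE;80,00;03/01/2024
S2;carol;15,25;07/01/2024
"""


def extract():
    """Extract: read raw rows from each source, each with its own format."""
    web = list(csv.DictReader(io.StringIO(WEB_SHOP_CSV)))
    store = list(csv.DictReader(io.StringIO(STORE_CSV), delimiter=";"))
    return web, store


def transform(web, store):
    """Transform: map both sources onto one schema (id, customer, amount, ISO date)."""
    rows = []
    for r in web:
        rows.append((r["order_id"], r["customer"].lower(), float(r["amount_eur"]), r["date"]))
    for r in store:
        day, month, year = r["day"].split("/")
        amount = float(r["total"].replace(",", "."))  # decimal comma -> decimal point
        rows.append((r["ticket"], r["client"].lower(), amount, f"{year}-{month}-{day}"))
    return rows


def load(rows, conn):
    """Load: write the unified rows into a central table of the (toy) warehouse."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(id TEXT PRIMARY KEY, customer TEXT, amount REAL, sale_date TEXT)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?, ?)", rows)
    conn.commit()


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    load(transform(*extract()), warehouse)
    query = "SELECT customer, SUM(amount) FROM sales GROUP BY customer ORDER BY customer"
    for row in warehouse.execute(query):
        print(row)
```

Once both sources share one schema in one place, a single query can answer questions (such as total spend per customer) that neither operational system could answer on its own.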

Data warehousing

A data warehouse is a centralized place where you can find all the data of your company. Data warehouse software is very often simply a database: Oracle, Teradata, SQL Server, MySQL, …

Reporting & BI

Reporting software (i.e. BI tools, or Business Intelligence tools) allows you to create charts and graphs that show the evolution of the different KPIs (Key Performance Indicators) that characterize your business.
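
Behind each chart there is usually a KPI computed per period. As a minimal sketch (the sales records are made up, and the choice of monthly revenue, number of sales and average basket as KPIs is an illustrative assumption), the following computes the monthly evolution that a BI tool would then render as a chart:

```python
from collections import defaultdict

# Hypothetical sales records: (ISO date, amount in EUR).
SALES = [
    ("2024-01-03", 120.0),
    ("2024-01-17", 80.0),
    ("2024-02-02", 50.0),
    ("2024-02-20", 150.0),
    ("2024-02-28", 100.0),
    ("2024-03-09", 90.0),
]


def monthly_kpis(sales):
    """Return {month: (revenue, number_of_sales, average_basket)}, ordered by month."""
    revenue = defaultdict(float)
    count = defaultdict(int)
    for date, amount in sales:
        month = date[:7]  # "YYYY-MM"
        revenue[month] += amount
        count[month] += 1
    return {m: (revenue[m], count[m], revenue[m] / count[m]) for m in sorted(revenue)}


def month_over_month_growth(kpis):
    """Relative change of revenue versus the previous month (None for the first month)."""
    growth, previous = {}, None
    for month, (rev, _, _) in kpis.items():
        growth[month] = None if previous is None else (rev - previous) / previous
        previous = rev
    return growth


if __name__ == "__main__":
    kpis = monthly_kpis(SALES)
    growth = month_over_month_growth(kpis)
    for month, (rev, n, basket) in kpis.items():
        g = "n/a" if growth[month] is None else f"{growth[month]:+.0%}"
        print(f"{month}  revenue={rev:7.2f}  sales={n}  avg_basket={basket:6.2f}  growth={g}")
```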

CRM software

The CRM acronym stands for “Customer Relationship Management”. This category covers Operational CRM and Analytical CRM software.

Advanced Analytics: 2 different approaches

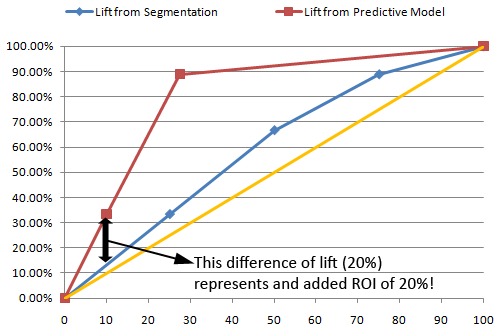

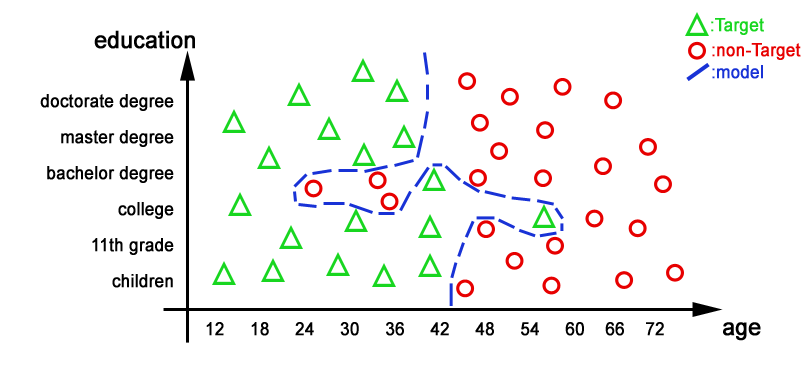

Analytical CRM tools can be classified into 2 categories: those based on segmentation techniques and those based on predictive techniques. By its very nature, segmentation is well suited to exploratory work. In contrast, predictive analytics is discriminative by nature: it aims to separate the customers who exhibit a given target behavior (for example, responding to a campaign) from those who do not. Analytical CRM tools based on predictive techniques usually generate a higher ROI for marketing campaigns.
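
To illustrate the exploratory side, segmentation groups customers without any target variable. The sketch below uses plain k-means as one common segmentation technique; this is an assumption for illustration, not necessarily the algorithm used by Stardust or by any specific Analytical CRM tool, and the customer features are hypothetical. In practice the features are usually standardized first, since k-means relies on Euclidean distances.

```python
import math
import random

# Hypothetical customers: (yearly spend in hundreds of EUR, purchases per year).
CUSTOMERS = [
    (1.0, 2.0), (1.5, 1.0), (0.8, 1.5),      # occasional, low spenders
    (9.0, 20.0), (10.0, 22.0), (8.5, 19.0),  # frequent, high spenders
]


def kmeans(points, k, iterations=100, seed=0):
    """Plain k-means (Lloyd's algorithm). Returns (centroids, labels)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]  # k distinct starting points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins the segment of its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: each centroid moves to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append([sum(col) / len(members) for col in zip(*members)])
            else:
                new_centroids.append(centroids[c])  # empty segment: keep old centroid
        if new_centroids == centroids:
            break  # centroids no longer move: converged
        centroids = new_centroids
    return centroids, labels


if __name__ == "__main__":
    centroids, labels = kmeans(CUSTOMERS, k=2)
    for c, centre in enumerate(centroids):
        members = [p for p, lab in zip(CUSTOMERS, labels) if lab == c]
        print(f"segment {c}: centre={[round(x, 2) for x in centre]} members={members}")
```

Note that nothing here says which segment will respond to a campaign; answering that question is the job of a predictive model.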

On the usefulness of the test dataset

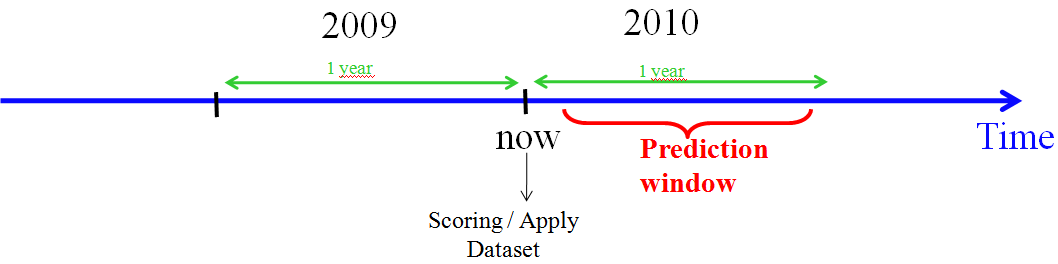

When comparing the accuracy of 2 different predictive models to find out which one is best (the one with the highest accuracy and the highest ROI), you must compare ONLY the lift curves computed on the TEST dataset and, if possible, always use the same TEST dataset.
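
As a minimal sketch of how the points of a lift curve are computed (the labels and the scores of the two models are made-up TEST data, and `lift_at` and `lift_curve` are hypothetical helper names), the lift at a given fraction of the population is the response rate among the top-scored records divided by the overall response rate:

```python
# Hypothetical TEST dataset: true responses and the scores of two competing models.
TEST_LABELS = [1, 0, 1, 0, 0, 1, 0, 0, 0, 0]
SCORES_A = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
SCORES_B = [0.4, 0.9, 0.3, 0.8, 0.7, 0.2, 0.6, 0.5, 0.1, 0.05]


def lift_at(scores, labels, fraction):
    """Lift obtained by targeting the top `fraction` of records, ranked by descending score.

    lift = (response rate in the targeted group) / (response rate in the whole dataset)
    `labels` holds 1 for a responder (target = yes) and 0 otherwise.
    """
    ranked = [lab for _, lab in sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)]
    n_top = max(1, round(fraction * len(ranked)))
    top_rate = sum(ranked[:n_top]) / n_top
    base_rate = sum(ranked) / len(ranked)
    return top_rate / base_rate


def lift_curve(scores, labels, steps=10):
    """(fraction, lift) points; with steps=10: 10%, 20%, ..., 100% of the population."""
    return [(i / steps, lift_at(scores, labels, i / steps)) for i in range(1, steps + 1)]


if __name__ == "__main__":
    # Both models are scored against the SAME test labels, so the curves are comparable.
    for name, scores in (("model A", SCORES_A), ("model B", SCORES_B)):
        curve = lift_curve(scores, TEST_LABELS)
        print(name, [f"{frac:.0%}:{lift:.2f}" for frac, lift in curve])
```

Every curve ends at a lift of 1.0 (targeting 100% of the population is the same as not selecting at all); what distinguishes the models is how high the curve rises for small fractions.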

Not all lifts are born equal

When we construct a model that makes no mistakes on the “training dataset”, we typically obtain a model that performs poorly on unseen data: its “generalization ability” is poor. The predictive model has learned from information that was, in reality, noise. This phenomenon is called “over-fitting”. A model that over-fits the data can reach an accuracy of 100% on the learning (training) set.
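
The effect can be reproduced with a deliberately over-fitting model. In the minimal sketch below (toy data; k-nearest-neighbours is an illustrative choice, not a TIMi technique), the 1-nearest-neighbour model memorizes every training point, noise included: on this data it reaches 100% accuracy on TRAIN but only 50% on TEST. With k=3, the vote smooths the noisy points out, so training accuracy drops below 100% while test accuracy rises to 100%.

```python
# Hypothetical 1-D data. The true rule is: label = 1 when x >= 5.
# Two TRAIN labels are deliberately wrong to play the role of noise (x=3 and x=7).
TRAIN = [(1, 0), (2, 0), (3, 1), (4, 0), (6, 1), (7, 0), (8, 1), (9, 1)]
TEST = [(1.4, 0), (2.4, 0), (3.2, 0), (3.4, 0), (6.4, 1), (7.1, 1), (7.3, 1), (8.6, 1)]


def knn_predict(train, x, k):
    """Majority vote among the k training points closest to x."""
    neighbours = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    votes = sum(label for _, label in neighbours)
    return 1 if 2 * votes > k else 0


def accuracy(train, data, k):
    """Share of records in `data` whose label the k-NN model predicts correctly."""
    hits = sum(knn_predict(train, x, k) == label for x, label in data)
    return hits / len(data)


if __name__ == "__main__":
    for k in (1, 3):
        print(f"k={k}: train accuracy={accuracy(TRAIN, TRAIN, k):.2f}, "
              f"test accuracy={accuracy(TRAIN, TEST, k):.2f}")
```

This is also why the training-set lift of a model says little about its real value: only the TEST dataset reveals the generalization ability.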
