The GitHub repository with the Anatella graphs and the Scala code used in the video:

https://github.com/Kranf99/TPC-H-Benchmarck-Anatella-Spark

About Hadoop, Spark and the Cloud

The Hadoop ecosystem is composed of many different tools: Ambari, HBase, Hive, Sqoop, Pig, ZooKeeper, Oozie, Flume, etc.

But one tool is better known than any other: Spark.

When people speak about Hadoop, 99% of the time they are actually talking about Spark.

Spark is really the “heart” of the Hadoop ecosystem.

Spark is mostly used as a batch ETL tool.

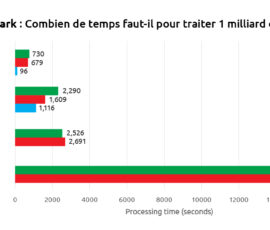

The main selling point of Spark is its speed: it is supposed to run fast (at least, that is what the marketing material on the Spark website claims).

The YouTube video linked below explains that a single machine equipped with Anatella is faster than a Spark cluster composed of more than 300 machines/nodes.

Why is Spark so slow? The reason is a mathematical law named Amdahl's Law.
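To give a feeling for what Amdahl's Law implies, here is a minimal sketch in Python. The law says that if only a fraction p of a job can be parallelized, the speedup on n machines is 1 / ((1 - p) + p / n). The function names and the value p = 0.95 are illustrative assumptions, not figures taken from the video or the benchmark.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Speedup predicted by Amdahl's Law for a given number of workers."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


def max_speedup(parallel_fraction: float) -> float:
    """Upper bound on the speedup when the number of workers grows without limit."""
    return 1.0 / (1.0 - parallel_fraction)


if __name__ == "__main__":
    p = 0.95  # assumed parallel fraction, for illustration only
    for n in (1, 10, 100, 300):
        print(f"{n:>3} workers: speedup = {amdahl_speedup(p, n):.2f}")
    print(f"limit: {max_speedup(p):.2f}")
```

Under this assumption, 300 machines yield a speedup of less than 19, and no cluster size can exceed 20: the serial part of the job (the "incompressible time") dominates long before the cluster gets large.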

The YouTube video linked below explains:

* Amdahl's Law and the “incompressible time” of distributed computation engines.

* why you shouldn’t use Spark for ETL processes.

* why it’s better to avoid using “cloud solutions” (Amazon, Azure) for “data science” projects.

The presentation used in the video:

http://download.timi.eu/docs/TIMi_vs_Spark.pdf

A quick one-page executive summary about the video:

http://download.timi.eu/docs/Spark_vs_TIMi_Executive_Summary.pdf

A white paper that summarizes the findings explained in the video:

http://download.timi.eu/docs/Spark_vs_TIMi_technical_white_paper.pdf

To watch the video by Frédéric Pierucci:

https://youtu.be/dejeVuL9-7c
