UPDATE: This article was initially published in October 2019. I am republishing it now because it’s still 100% relevant for 2022. I can’t believe that people are still trying to promote anything related to “Data Vault” in 2022? ![]()

![]() That’s crazy! 😳 😵

That’s crazy! 😳 😵

Data vaulting: from a bad idea to inefficient implementations

An efficient data management mechanism should have two main characteristics: operational efficiency (it must run faster and with less resources than those it aims to replace) and structural clarity (it must be straightforward to access, understand, and query).

As IT data manager, you know you sometimes need to put more emphasis on one aspect (at the cost of hurting the other), and most decisions you take will follow one of those two axis: either you want the organization to process the data faster (and get more results, and you brace your team for a bit of increased complexity), or you want data management to be easier (and run a lean IT department). A third aspect (corollary of the second one) should also obsess you: independence from any third party when it comes to the ownership and the maintainability of your data. A good system (and they exist!) would actually reconcile them all.

With this in mind, let’s explore how data vaulting does the exact opposite:

- The SQL code to query the data becomes so complex that you cannot write it by yourself: you need an external tool to actually be able to write it.

You should know that one reason behind the global & universal adoption of SQL as the only language currently available to query data in databases is that SQL is universally recognized to be a language that is simple to use. So simple, in fact, that nearly anybody can use it to extract knowledge and value out of your data. “Data vault” destroys all that by forcing you to create SQL commands that are impossible to understand. - A consequence of the increased complexity of the SQL code is that even the simplest queries are difficult to write, maintain & support. Furthermore, the “complex” data transformations are just becoming impossible to do.

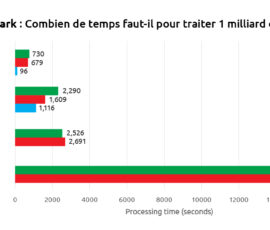

- With Data Vault, the run time is much slower than what you would get on any other data modeling methodology, to the point that your database freezes and stops responding altogether.

- The implementation is longer and more expensive than any other DW implementation (Here is a quote from the comments of Dr. John Tunnicliffe about this subject: “I worked on a DataVault project for a year. A team of 40 trying to make an existing DataVault fit for purpose, when in reality starting again from scratch using another technology would have been cheaper and more beneficial”).

- The storage space is typically multiplied by three. This means that, if you use a database in the cloud, the data vault methodology will also, basically, multiply by three your operating costs.

- With its “import data fast” and its “analyze data slowly” properties, Data Vault appears to be an open invitation to practice the “garbage principle”: i.e. store all data indifferently, consume a lot of resources and disk space for nothing, document nothing, never ever use the imported “garbage” data.

- Data Vault increases dependency on third party for deployment and maintenance (i.e. more precisely: External consultancy company takes your data hostage)

- Data Vault prevents the adoption of any analytical culture in your company.

How did we reach these conclusions?

Everything started one year ago: We had our first business meeting with some technical “guru” of Data vault. …and it was quite funny! These “gurus” explained us that they used the “data vault” way-of-thinking to design a data base used as the backend of a small application (to manage some employees) and then they proceeded to show us one data transformation (i.e. one SQL command) that was used inside their application.

This data transformation was quite simple: i.e. using a graphical ETL tool (such as Anatella), you could design this very simple&straightforward data transformation using a few “boxes” (around 5 boxes).

But then, these “data vault” gurus showed us the SQL command that was actually used in their application: The SQL command was literally filling 3 full computer screens without any indentation or spaces! Aaaargh!

Furthermore, this SQL command was so complex that it was practically impossible to write “by hand”: They actually used a tool to create it. When the guy showed me, for the first time, this very loooooooong SQL command, we were all laughing, internally, thinking it was a good joke… Unfortunately, this was not…

If this 3-screen-long SQL command is equivalent to a data transformation that is composed of 5 “boxes”, then what’s happening if you want to create a SQL command that is equivalent to a data transformation that involves *more* than 5 “boxes”? Will it be 6-screen-long SQL command? What an abomination! That will be impossible to design, impossible to maintain, impossible to do anything! …but, strangely, the “data vault” gurus were not shocked and not even concerned by that?

As you can expect, our business meeting with the “data vault” gurus did not give any results and we quickly went away without giving any more thought about this, obviously, totally flawed technology. We were all laughing and all thinking: “Nobody with a healthy mind will never use such an abomination”.

…But we were wrong, because, a few months later, we found some consultants installing a “data vault” oriented database at one of our customer site? So, it got me interested in the technology again. Maybe, I was misled by the first example that I saw? Maybe, this was not so bad, after all?

You should know that I am mostly interested in analytical projects (BI, KPI creation, ML, AI, data mining). For such kind of project, it’s very important to have a fast data manipulation tool: This allows you to explore your data faster and, more importantly, it allows you to find quickly the “golden egg” in your data. But, this “data vault way” is not only giving me headaches (and slowing down my brain), it’s also *EXTREMELY* slow at run-time! More precisely: The one thing that you don’t want to execute inside a database is a big join between 2 large tables (data base engines are very bad at that). …and the “data vault way” of doing things is forcing you to do *many* big joins ALL THE TIME! This results in extremely slow processing speed, on *ANY* infrastructure size. In particular, even on large, distributed infrastructures, you’ll also get very bad running-time because distributed infrastructures are very bad at joining large tables. So, I was curious to see if other people had the same experience as me with this “data vault” technology. Thus, I quickly did a “google search” and it lead me to this interesting LinkedIn page:

https://www.linkedin.com/pulse/data-vault-good-bad-ugly-dmytro-andriychenko/

On this LinkedIn page, the author writes: “It (“data vault”) favours performance of data loading and maintainability of the solution over analytical usability… development and maintenance are likely to be difficult to justify to stakeholders.” ..and it is really an understatement! ..but the most interesting parts of this webpage are made by the different practitioners, at the bottom, in the “comment section”!

Some comments about

The speed of Data Vault

Here are some comments from different practitioners about the speed of DV (Data Vault).

- Comments from Thomas Kejser (https://www.linkedin.com/in/thomaskejser/)

Since DV is forcing you to create many joins between different tables, Thomas Kejser writes:

“The joining becomes problematic when you have data that no longer fits memory or when the join trees start to grow beyond 10 or so joins (this happens all the time when using DV!). At that point, your query optimizer is running out of steam and the risk of getting bad query plans goes up dramatically (this means that the database engine will be stuck or run forever). And that is of course the problem with DV in a nutshell: if you start off small … and grow the warehouse …, you have effectively shot yourself in the foot with a DV model.

…Additionally, you are typically forcing the (database query) optimizer to perform range scans and loop joins instead of key-to-key hash joins when extracting data out of a vault like this. Here, you are throwing away another 3x performance.”

Later, he writes:

“I would add … that Data Vault has several fundamental design flaws that unfortunately display its creator’s ignorance of how modern database engines work.”

The data model inherently generates joins of the form: a JOIN b ON a.key = b.key AND a.date BETWEEN b.date_from and b.date_from. It is very difficult for query optimizers to estimate the cardinality of these joins – leading to consistently bad query plans (this means that the database engine will be stuck or run forever)….

Adding to the pain – more tables in the model (as you correctly observe – even more than 3NF) – generate larger join trees for queries – which again leads to poor query plans (read: the database gets stuck again). Correct query planning is – I am sure you aware – an NP complete problem. Making the search space greater by adding more joins – greatly increases the likelihood that you will get terrible query plans and poor performance

It is also very unclear what you mean by data vault being particularly good for MPP (MPP=massively parallel computing). In fact, Data Vault is particularly terrible for MPP. To achieve the great performance and easy maintenance in MPP – it is important that large joins are co-located. i.e. it should generally be the case that when two large tables are joined – the table are joined on a key that is distributed (typically using a hash) the same way on both sides of the join. Using a highly normalized model makes this difficult – often impossible – to achieve. You could argue that Data Vault is in some way good for MPP – because it is good for MPP vendors. DV drives up the cost of the hardware you need to run your database – requiring larger, more complex clusters, to manage.

Finally, the meta question is what problem it is you are trying to address? … using a data model that consistently leads to terrible performance where it matters the most (namely in read queries) – just makes the issue worse.”

- Comments from Dr. John Tunnicliffe (https://www.linkedin.com/in/drjohntunnicliffe/ )

Dr. John Tunnicliffe writes:

“… another fundamental flaw in the DataVault approach is that, if practiced as outlined by its creators, data is divided up for no good reason into lots of tiny, meaningless tables that clutter the schematic and detract from query performance. For example, in the project I have recently left the business were maintaining a single 2,500 row and 12-column wide table …as the data underpinned every single business rule in the system.

However, in order to meet the ludicrous ‘Data Vault design principles’, this single table was shredded into 18 tables (sick!) which were a combination of hubs, sats and links.

Needless to say, to apply any business rule in the system, all 18 tables had to be joined back together to recreate the original 2,500 row and 12-column wide data set before the rules could be applied. Somehow, the ‘Data Vault architects’ on the project could not see the problem with this. The problem is performance! Combining 18 tables using date range queries and non-enforced foreign key relationships means the query optimizer has no chance of calculating a good query plan (this means that the database engine will be stuck or run forever). Of course, the organization first threw hardware at the problem (top end machines with 1.5 terabytes of memory!) and subsequently blamed the poor performance on the database engine. Of course, they did not want to admit that the fundamental problem was bad database design caused by selecting a very, very bad design methodology: the DV methodology. As Thomas describes it, ‘DataVault is an anti-pattern’.“

Later, Dr. John Tunnicliffe writes:

“In my view DataVault suffers from fundamental design flaws as it completely ignores the platform it is designed for: i.e. the relational database engine (RDBMS). This is because DataVault insists everything is a many-to-many relationship AND worse, that NO foreign key relationships should be enforced AND worse still, joins between tables are based on date ranges (load date, end date). Relational database engines rely on enforced foreign key relationships to optimize a query. If none exist, it cannot calculate how many rows will be on each side of a join. Using date range queries to join tables makes things even worse as any index on the table cannot be used. The result: every query becomes a table scan where every row of data in the table has to be read to satisfy a query. This means many gigabytes of data are read from disk for each and EVERY query (this means terribly long running times for all queries!). There is NO WAY to optimize a query against a DataVault because the table design is fundamentally broken. So a set of views on top of this incredibly complex schema will not return the data to the end user in anything close to acceptable time frames. DataVault gurus will tell you that this is where “pits and bridges” come in (i.e. temp tables used to materialize the data). However, in reality “pits and bridges” are just sticking plasters over the gaping wound caused by DataVault design principles. …Better to drop any notion that DataVault will somehow offer you something better than traditional database design techniques.”

Later, Dr. John Tunnicliffe writes:

“DataVault is proven to be terrible when it comes to extracting data and making it useful to the business for analytics and reporting. Ultimately it does not meet today’s needs. Yes, it looks initially attractive. Some kind of intellectual beauty. But as it ignores the physical realities of the RDBMS (RDBMS=data base engines) and therefore is not fit for purpose… I worked on a DataVault project for a year. A team of 40 trying to make an existing DataVault fit for purpose, when in reality starting again from scratch (using another technology) would have been cheaper and more beneficial. So much for the “flexibility” of DataVault!”

- Comments from Vincent R (https://www.linkedin.com/in/vrainardi/ )

Vincent R. writes:

“In my view, Data Vault approach is trying to solve data modelling in too much technical way, to the point that it wastes our time and money. I can confirm your finding that the read performance is an issue, and that DV approach requires too many tables (so much more than necessary), to the point that an automated ETL solution is required. I would advise against using DV approach. … DV, is costly and even though it keeps us in the job, it does not give the business a good value for money.”

Some comments about

The space consumption of Data Vault

Here are some comments from different practitioners about the space consumption of DV (Data Vault):

- Comments from Thomas Kejser (https://www.linkedin.com/in/thomaskejser/)

Since DV is forcing you to create many unnecessary tables (e.g. SATS, PIT and BRIDGE tables), Thomas Kejser writes:

“The SATS table contain the same data as the PIT and BRIDGE tables right? So that’s redundant isn’t it? This is particularly expensive if you are modeling something that will eventually turn into a fact table. I think we agree that direct SAT joins are impractical because they mess with the query optimizer? I think we also agree that loading data that changes over time has some intrinsic complexity. With a dimensional model, you build the ETL in such a way that you hit the final result in one go. With Vault, you take an additional step (landing in the vault). Additionally, you have to maintain special tables just to join effectively, and you have to model the source in a way that isn’t the way the data will be consumed. IF you need to reload the warehouse completely from scratch – your bridge/PIT tables aren’t going to be of much use anyway (unless you’ve wasted an extraordinary amount of storage to hold them). So, you hold at least 2 extra, unnecessary copies of all the data: 1) The vault itself and 2) PIT/Links. I understand that you can auto generate a lot of the ETL code (as you can in a dimensional model) – but how are extra copies of data, and additional data model more “agile” than just doing what you set out to do in the first place?”

Some comments about

The database technologies linked to Data Vault

Here are some comments from different practitioners about the future of the database technologies linked to DV (Data Vault):

- Comments from Thomas Kejser (https://www.linkedin.com/in/thomaskejser/)

“At some point in the comments, some people pointed out that, in the near future, there will be some new hardware (still to be invented) that will be so efficient that the many “joins” (that DV is forcing you to compute all the time) won’t matter so much anymore. To this comment, Thomas Kejser responds:

The last 5-10 years has seen a dramatic shift in the bottlenecks of databases. We have not only reached the limits of Moore’s “law” – memory latency is now the main bottleneck for data warehouse workloads that perform joins. Your total query throughput is gated by the amount of DRAM lookups you can do per second. And that is a number that is going up VERY slowly with new releases of technology (and it is expensive to acquire powerful DRAM lookup capability). Even hash joins (which are much faster than loops) require a L3 cache miss for all but the most sized tables – and even the best CPU core you can buy for money can’t service many of those. Hence, massive joins trees (created by DV) are poison for your performance and they will remain so unless someone comes up with a way to make electricity move faster than the speed of light.“

Some comments about

The meta-data management of Data Vault

Here are some comments from different practitioners about the meta-data management of DV (Data Vault):

- Comments from Dmytro Andriychenko (https://www.linkedin.com/in/andriychenko/ )

Dmytro Andriychenko is the original author of the article. He writes:

“I tried to be impartial in my article, but it is obviously still clearly shown my (negative) attitude to it :-). I particularly like the “Data Hoarding” expression – indeed Data Vault appears to be an open invitation to practice the “garbage” principle :-). I guess if one is to practice Data Vault, then stringent development practices based on meta-data driven auto-generated code are required with careful analysis of what should be preserved and what should be left out. … Still I personally find it hard to justify all the overheads of the methodology.”

When you read the comments here above, you quickly realize that all these “data vault” practitioners are very passionate about the subject: They don’t hold and nuance their comments. Indeed, this “Data Vault” technology is so totally absurd and ridiculous that it’s very difficult to understand why any sane people would want to use it and, thus, it leads to some “inflammatory” negative comments. I realize now that, myself, I might also be too vehement about it! Sorry about that!

To summarize all these technical comments

- You should be completely insane or stupid to want to use the “data vault way” to do anything intelligent with your data…

- Data vault is garbage because it is:

- …terribly inefficient during the design of new data transformations: You cannot write yourself any SQL commands to create the required data transformations: You need to use an overly complex tool that will create for you some SQL commands that are unreadable monstrosities.

- …terribly inefficient at run-time: Your database will slow down terribly and you’ll never be able to manipulate any large volume of data.

- …terribly inefficient in terms of storage: The space required to store your data is tripled (as you need to keep at least 2 extra, unnecessary copies of all the data)

- …terribly inefficient in terms of meta data management: i.e. Data Vault appears to be an open invitation to practice the “garbage principle”: i.e. store all data indifferently, consume a lot of resources and disk space for nothing, document nothing, never ever use this “garbage” data.

- Data vault is really good for the business of “consultancy” companies for two main reasons:

- With “Data Vault”, consultancy companies can (easily) charge 5 days of consultancy work to do one join between 2 tables (what you normally do in 1 minute maximum).

- With “Data Vault”, consultancy companies can take your “data hostage”.Indeed, data vault generates an explosion of tables. To remind you the first comment from Dr. John Tunnicliffe: He gives an example where, originally, there was one table with 12 columns in the database. ..And after being “data vaulted”, the same table ended as 18 tables with more than 8 columns in each table (if you follow the DV methodology). ..And, obviously, the table’s names and the column’s names are so obscure, so that, only the “clever” consultants can actually extract anything out of the data, and *only* if they have the proper tool to be able to generate the SQL command required for the extraction. In a sense, this tool acts as a “key” to unlock your own database: if you don’t have access to this “key”, you can’t use anymore your own data. In other words, it’s not possible (or, at least, very difficult) to do anything “by yourself” with the data. This is another way to say that they are effectively forcing you to pay a “ransom” to access your own data because they are the only ones able to do anything with it.

…But the better situation (for the consultancy companies that want to take your data hostage) is when you store you “data vault” in a database on Amazon. You see, the Amazon configuration is overly & unnecessary complex by design: i.e. it requires many complex and intricate certifications before being able to do anything. So, if you combine (1) the obscure complexities of the “Data Vault” technique with (2) the existence of an undisclosed&hidden “key” to access your data (i.e. the tool required to generate these intricate SQL command) and (3) with the obscure complexities of the Amazon platform, you end up with a situation where only the external consultants can do anything with your data.

Avoid anything related to Data Vault

So, my final advice is the following: Avoid anything related to Data Vault. The only people that are gaining something from this flawed methodology are the consultants getting big bucks to implementing it.

Again, if your objective is to rationalize & improve the access to the data available in your company to allow your employee’s to take better decision, based on real numbers and real statistics extracted for your own data, then stay away from data vault. You should avoid using the “Data vault” technology because it renders access to data so complex and intricate that nobody, with the exception of a few selected “experts” (e.g. very expensive external consultants) can actually use the data. In other words, “Data vault” will effectively prevent the adoption of an analytical culture in your company. In opposition, an easy graphical data management tool, such as Anatella (that offers an easy “self-service” interface) is greatly facilitating the general adoption of an analytical culture in your company.

Frankly, after the initial meeting that we had one year ago, we all had this feeling that “Data Vault” is totally absurd and ridiculous (when we saw this SQL command that filled in 3-screens for practically nothing! ![]() ) and we all thought that nobody in their right mind would ever use it. What an abomination!

) and we all thought that nobody in their right mind would ever use it. What an abomination! ![]()

For us, the case was definitively closed. ..but, against all odds, the cupidity of some unethical consulting companies made it open again! ![]() That was definitely a surprise to me! This page is a reaction to this surprise. With this page, we really intended to help companies that lacks the information or the knowledge to understand that “Data Vault” is not good for them. Indeed, this “Data Vault” technology is so deeply and obviously flawed that we really felt that it was our obligation, as citizen of the world, to give you a big “Warning Message” against it. …Hope it helped!

That was definitely a surprise to me! This page is a reaction to this surprise. With this page, we really intended to help companies that lacks the information or the knowledge to understand that “Data Vault” is not good for them. Indeed, this “Data Vault” technology is so deeply and obviously flawed that we really felt that it was our obligation, as citizen of the world, to give you a big “Warning Message” against it. …Hope it helped! ![]()

Ahhh… what a good rant!

haha, didn’t know about this Data Vault trend really. It would seem to me as if they were pushing forward for using some incredibly advanced forms of hardware and software parallelism (that would be the only thing that would make me split a table into several ones)

I think I can sense that this one was written by Daniel (Haha)

Anyway, good read (even though quite lengthy)

Hi Juan, thanks for the feedback!!