NEVER WAIT ANYMORE

FOR A DATA TRANSFORMATION

FAST SORTING

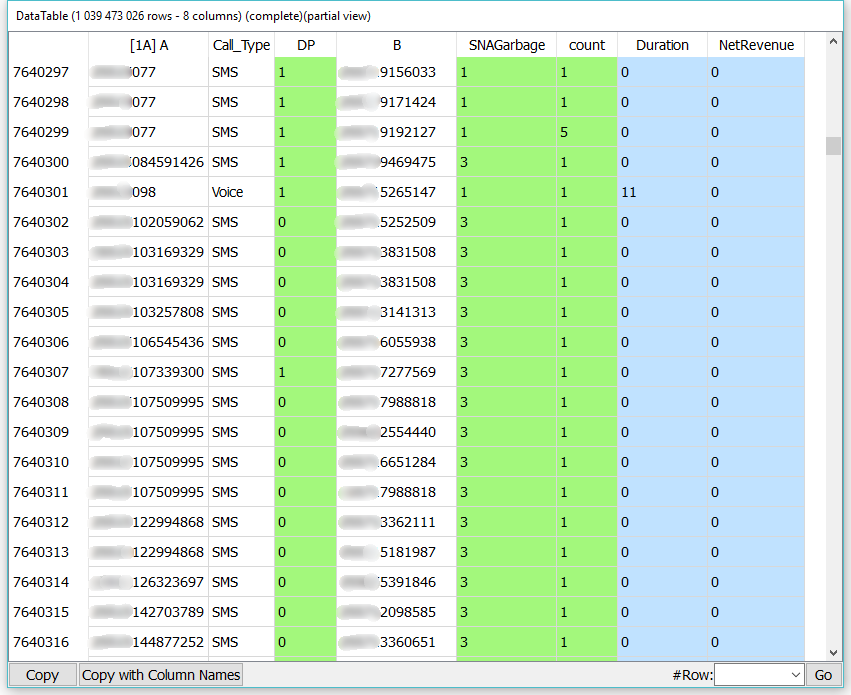

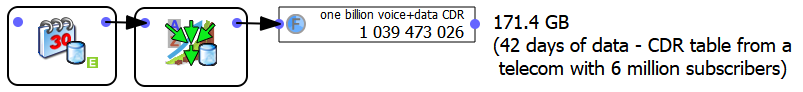

FOR A TELECOM

Anatella sorts a large CDR (Call Data Record) table with 1 billion rows and 8 columns. Stored as a 171 GB text file, this table is sorted in 99 seconds using less than 300 MB of RAM.

1000 million rows

300 MB of RAM

99 seconds
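Sorting a file far larger than the available RAM is usually done with some form of external merge sort. The sketch below is a generic illustration of that idea only; it is not Anatella's implementation and will not match its speed. The function name `external_sort`, the `;` separator, the key column and the chunk size are all assumptions made for the example.

```python
import heapq
import itertools
import os
import tempfile


def external_sort(in_path, out_path, key_col=0, sep=";", chunk_rows=100_000):
    """Sort a delimited text file on one column while holding at most
    `chunk_rows` lines in memory (hypothetical helper, illustration only)."""

    def key(line):
        # Keys are compared as strings, so the order is lexicographic.
        return line.rstrip("\n").split(sep)[key_col]

    # Phase 1: read fixed-size chunks, sort each in memory, spill to disk.
    run_paths = []
    with open(in_path, encoding="utf-8") as src:
        while True:
            chunk = list(itertools.islice(src, chunk_rows))
            if not chunk:
                break
            # Make sure every line ends with a newline before it is merged.
            chunk = [l if l.endswith("\n") else l + "\n" for l in chunk]
            chunk.sort(key=key)
            fd, path = tempfile.mkstemp(suffix=".run")
            with os.fdopen(fd, "w", encoding="utf-8") as run:
                run.writelines(chunk)
            run_paths.append(path)

    # Phase 2: k-way merge of the sorted runs, one line per run in memory.
    runs = [open(p, encoding="utf-8") for p in run_paths]
    try:
        with open(out_path, "w", encoding="utf-8") as dst:
            dst.writelines(heapq.merge(*runs, key=key))
    finally:
        for f in runs:
            f.close()
        for p in run_paths:
            os.remove(p)
```

Memory use is bounded by `chunk_rows` rather than by the file size, which is why a table of hundreds of gigabytes can in principle be sorted with a few hundred megabytes of RAM.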


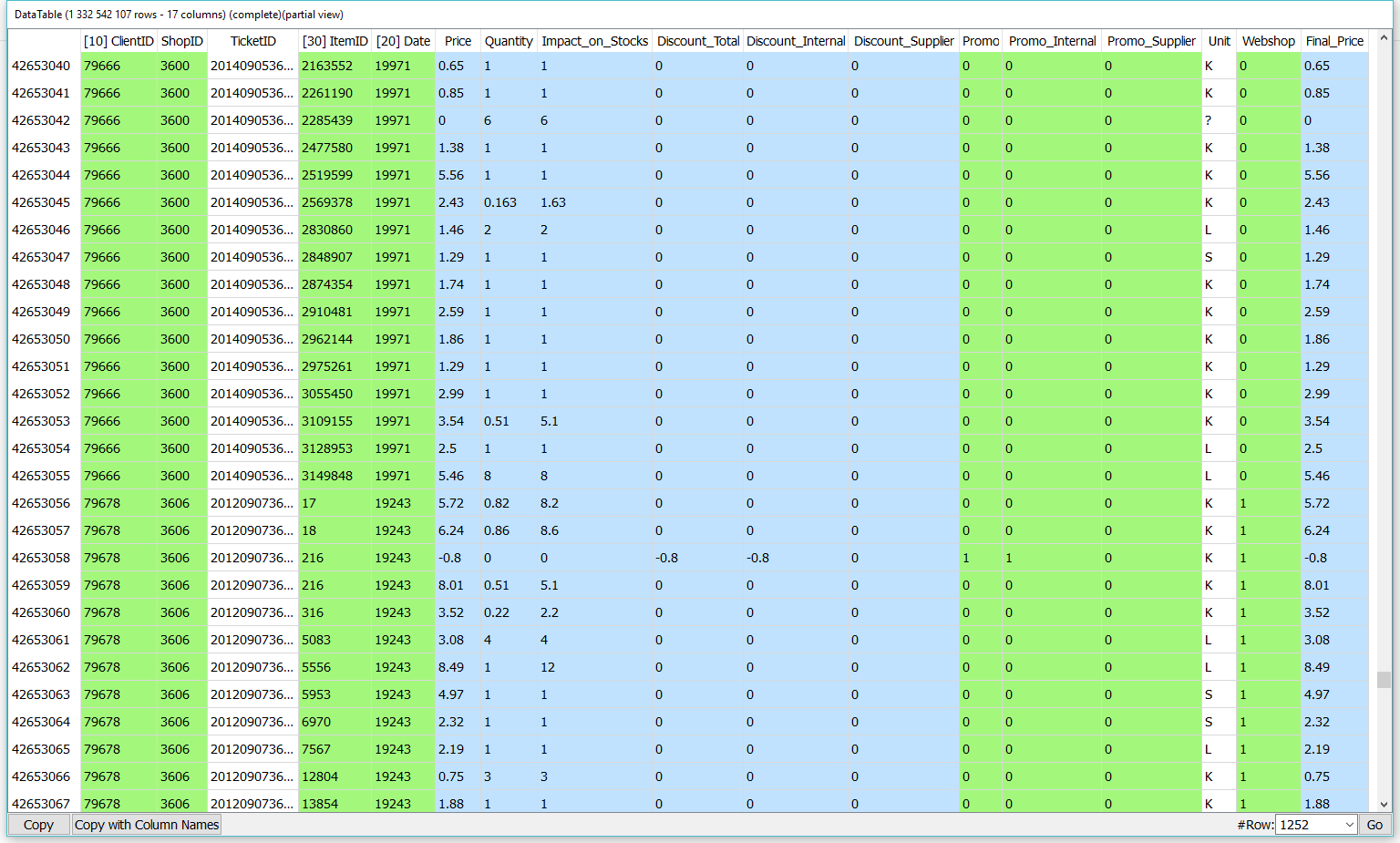

1300 million rows

150 MB of RAM

70 seconds

CALCULATION OF AGGREGATES

FOR A SUPERMARKET

From the ticket table (1.3 billion rows and 17 columns), Anatella calculates the following aggregate: the percentage of shopping done on the web. This KPI is computed in 70 seconds using less than 50 MB of RAM.
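An aggregate of this kind can be computed in a single streaming pass, which keeps memory use independent of the number of rows. The following is a minimal sketch of that idea, not Anatella's implementation. It assumes a `;`-separated text file with a header row, and the column names `channel` and `amount`, the value `web`, and the choice to weight the percentage by amount rather than by ticket count are all hypothetical.

```python
import csv


def web_share(path, channel_col="channel", amount_col="amount",
              web_value="web", sep=";"):
    """Return the percentage of the total amount that was bought on the web.

    The file is read row by row, so only two running totals are kept in
    memory regardless of how many rows the table has.
    """
    web_total = 0.0
    grand_total = 0.0
    with open(path, newline="", encoding="utf-8") as f:
        for row in csv.DictReader(f, delimiter=sep):
            amount = float(row[amount_col])
            grand_total += amount
            if row[channel_col] == web_value:
                web_total += amount
    # Guard against an empty table to avoid dividing by zero.
    return 100.0 * web_total / grand_total if grand_total else 0.0
```

For example, a table with one web ticket line of 25 and one in-store ticket line of 75 gives a web share of 25 percent.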


NOTICE

All examples mentioned on this page were run on this laptop: